The young woman was Karen Clark, and the insurance market she entered in the mid-1980s was in a halcyon period. There had been no major earthquakes and scarcely a hurricane. Interest rates were dizzyingly high. Many companies were happily filling their books without much regard for the exposures piling up.

Some insurers, however, at least had a sense of what they did not know. One of them was Karen Clark's employer, Commercial Union (CU), whose then parent company eventually became Aviva. CU had been writing a lot of US east coast property and was concerned that it was building up exposure. The way the group managed accumulations at that stage, says Karen, was very subjective. The managers would sit down together and come up with a probable maximum loss (PML) figure for each county. The figures they reached were "arbitrary."

However, they recognised the issue and were grasping for a more sophisticated and robust way of tackling it. Karen, who was working for an internal research group within CU, thought there must be a more analytical way of approaching the problem. She had the idea of using a computer-based model to analyse the company's exposures and help senior executives make underwriting policy decisions.

At that time, computers were just beginning to become tools to support decision making; until then, insurance companies had used them purely as data processing engines. Likewise, insurance actuaries had been using mathematical models for life business, but the concept of the model as a management tool was a new one.

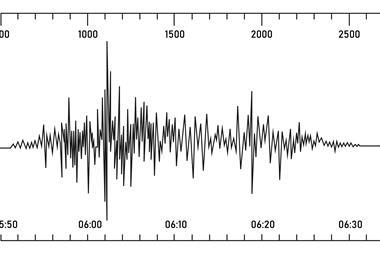

In the 1980s, growing computing power was a critical element of this new discipline, because it allowed an insurer or reinsurer to generate thousands of hypothetical storm scenarios and examine their impact on insured property.

The problem, as Karen explains, was that insurers needed good quality loss data for their standard statistical approaches to loss estimation. When it came to natural catastrophes, that information simply was not available. Catastrophes are exceptional events: the quality of historical data is questionable, and the intervals between events are so long that the underlying risk can change significantly in the meantime.

For example, one of the most destructive storms ever to hit the United States was the Great Miami hurricane of September 1926, a category 4 storm. The question insurers were asking was: what would their losses be if such an event happened now? Damage in 1926 is estimated at $6 million in contemporary dollars. Inflation alone would raise that figure to about $85 million at 2005 prices, but because property values have grown so much, the same event today could result in insured losses of $80 billion.

Karen set off to see how such models could be developed. She spent months talking with experts, reading research and collecting data from historical events to see what she could put into a model. As she points out, in many cases loss experience simply was not available. In 1986, she wrote a paper for the Casualty Actuarial Society, 'A Formal Approach to Catastrophe Risk Assessment and Management'.¹

In it, she sets out what remains the basis of the catastrophe model today. In its own words, the paper presents "a methodology based on Monte Carlo simulation for estimating the probability distributions of property losses from catastrophes, and discusses the uses of the probability distributions in management decision making and planning."
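To make the idea concrete, here is a minimal Monte Carlo sketch in Python of the kind of simulation the paper describes. It is not AIR's model: the Poisson event frequency, the lognormal loss per event, the parameter values and the function names `sample_poisson` and `simulate_annual_losses` are all hypothetical assumptions chosen for illustration. Each simulated year draws a number of events and a loss for each event; many simulated years together approximate the probability distribution of annual losses.

```python
import math
import random


def sample_poisson(rng: random.Random, lam: float) -> int:
    """Draw a Poisson(lam) count using Knuth's multiplication method (fine for small lam)."""
    threshold = math.exp(-lam)
    count = 0
    product = rng.random()
    # keep multiplying uniforms until the product drops to e^-lam or below
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_losses(n_years: int, event_rate: float, mu: float, sigma: float,
                           seed: int = 0) -> list[float]:
    """Simulate the total loss in each of n_years hypothetical years (assumed model)."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    losses = []
    for _ in range(n_years):
        n_events = sample_poisson(rng, event_rate)  # how many catastrophes hit this year
        # assumed lognormal loss per event: mostly modest losses, occasionally very large ones
        year_loss = sum((rng.lognormvariate(mu, sigma) for _ in range(n_events)), 0.0)
        losses.append(year_loss)
    return losses


if __name__ == "__main__":
    # Hypothetical inputs: 0.6 damaging events a year, median event loss of $50m
    losses = simulate_annual_losses(10_000, event_rate=0.6, mu=math.log(50.0), sigma=1.5)
    mean_loss = sum(losses) / len(losses)
    print(f"average annual loss over {len(losses):,} simulated years: ${mean_loss:,.0f}m")
```

A real catastrophe model replaces the single severity distribution with a catalogue of physically simulated events run against a company's actual exposures, but the structure of "simulate many years, then study the distribution of losses" is the same.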

The paper created enough interest that, when CU disbanded its internal research unit, Karen set up her own company, Applied Insurance Research (AIR), in 1987 and produced her first model. It was a very detailed simulation, which included thousands of possible events. By her own admission, the industry did not flatten her in the rush to buy catastrophe models. "It was a struggle," she says. The industry had not suffered any really big losses, although Hurricane Hugo in 1989 had piqued its interest. By 1992, she had 35 clients.

Then came Hurricane Andrew in 1992. Eleven US property-casualty insurers failed; others that survived required large capital injections. Rates for property-catastrophe reinsurance soared, and investors saw opportunities for new companies with clean balance sheets that could use the modelling tools to write the business more scientifically from the start. Eight new specialist property catastrophe reinsurers were established in Bermuda, and the demand for modelling was established. The market at that time consisted, as it does today, of AIR, EQECAT and Risk Management Solutions (RMS).

Models now cover all major natural hazards, including hurricanes, earthquakes, winter storms, tornadoes, hailstorms and floods, for more than 40 countries throughout North America, the Caribbean, South America, Europe and the Asia-Pacific region. Man-made perils, most notably terrorism, are also modelled.

Decision making

In those early days, says Karen, the models were decision making tools for senior executives, who would scrutinise both the data going in and the output against their background knowledge of their company's business and challenge the way it was being used.

"The models do not produce magic numbers," she says. "After Hurricane Andrew, as detailed data became more common and more plentiful, and as insurance and reinsurance companies grew into larger groups through consolidation, the cat model was increasingly relegated to the underwriting department."

"The function became less one of decision making and more one of data processing," says Karen. "Although the models produce a full exceedance probability curve, management got a couple of numbers: the 1 in 100 year event exposure and the 1 in 250 year event exposure. There is a huge disconnect between senior executives and the people who are running the models, and that is not good," she adds. Senior management is not exercising judgement over whether the data and the model output are consistent with the reality of the business.
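The exceedance probability (EP) curve, and the 1 in 100 and 1 in 250 year figures taken from it, can be read off a set of simulated years, as in the minimal Python sketch below. It assumes a simple empirical estimate (the i-th largest of N simulated annual losses is equalled or exceeded with probability about i/N); the function names `exceedance_curve` and `loss_at_return_period` and the synthetic loss data are hypothetical. A 1 in 250 year loss corresponds to an annual exceedance probability of 1/250 = 0.4%. Because it works on annual totals, this is an aggregate EP curve; an occurrence EP curve would use each year's largest single event instead.

```python
import random


def exceedance_curve(annual_losses: list[float]) -> list[tuple[float, float]]:
    """Empirical EP curve: (loss, estimated probability that a year's loss is at least that large)."""
    ordered = sorted(annual_losses, reverse=True)
    n = len(ordered)
    # the i-th largest of n simulated years is equalled or exceeded in about i out of n years
    return [(loss, (i + 1) / n) for i, loss in enumerate(ordered)]


def loss_at_return_period(annual_losses: list[float], return_period: float) -> float:
    """Loss with annual exceedance probability 1/return_period, e.g. the 1 in 250 year loss."""
    n = len(annual_losses)
    if return_period > n:
        raise ValueError("need at least as many simulated years as the return period")
    ordered = sorted(annual_losses, reverse=True)
    rank = max(round(n / return_period), 1)  # e.g. 10,000 years / 250 -> 40th largest year
    return ordered[rank - 1]


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical catalogue: about half of the 10,000 years have a loss ($ million)
    years = [rng.lognormvariate(3.0, 1.6) if rng.random() < 0.5 else 0.0 for _ in range(10_000)]
    pml_100 = loss_at_return_period(years, 100)
    pml_250 = loss_at_return_period(years, 250)
    print(f"1 in 100 year loss: ${pml_100:,.0f}m; 1 in 250 year loss: ${pml_250:,.0f}m")
    # the tail beyond the 1 in 250 point, which a two-number summary leaves out
    print(f"largest simulated year: {max(years) / pml_250:.1f}x the 1 in 250 year loss")
```

Reporting only the two return-period figures discards the rest of the curve; the last line of the example compares the largest simulated year with the 1 in 250 year loss, which is the part of the tail that a two-number summary never shows.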

Karen puts some of the criticism of cat models following Hurricane Katrina in 2005 down to this relegation of cat models to data processing. A Hurricane Katrina scenario, she argues, was certainly in AIR's catalogue of events, along with much bigger events, since Katrina was classed as only a 1 in 30 year event. "It was by no means a worst case scenario," she says. "We were surprised that they were surprised."

She is also somewhat critical of the way rating agencies have used models, by focussing on the 1 in 100 and 1 in 250 year events. "You could have scenarios which are two to three times as large as a 1 in 250 year event, and they are not looking at that." To put it another way, there are some really big events out on the tail of the probability curve. Their likelihood may be less than 0.4% a year, but they are nonetheless possible and could have a devastating effect on a company's balance sheet.

Karen points out that Florida has more than $2 trillion of property. A category 5 hurricane could result in $200 billion in losses for property and business interruption alone. In the case of a major earthquake, the suddenness of the event is likely to mean not just major property damage but also losses in other classes of business, such as workers' compensation.

Weaknesses

At the same time, Karen explains, the 2005 hurricanes made it clear that insurers had underestimated replacement costs, particularly for commercial buildings. Models are always a work in progress, being refined on the basis of experience, Karen comments. "We also learned that certain types of construction, particularly light metal buildings, are more vulnerable than we had previously assumed, and we have made adjustments."

She points out that the models are always subject to fine-tuning, but the changes are incremental. What causes modellers to change their models? "There are hundreds, no, thousands of scientists working on hurricanes, earthquakes and other events, but they have been doing that for decades. Even when we started we had a pretty good understanding of how these events caused damage. Nothing has happened fundamentally to change our view of the hazard. Scientific advance is gradual. It happens over time, so a radical change in our view of risk does not happen. Fine-tuning after events is all that is needed."

The refinements made to the models in response to the 2005 hurricanes have given underwriters more confidence and perhaps more realistic expectations of what the models can deliver. Modelling certainly underpinned the record issuance of catastrophe bonds in 2006, which was AIR's busiest year so far in terms of supporting capital markets' cat risk structures.

While there are still fresh markets in the insurance industry for catastrophe models, Karen also sees wider applications. For instance, interest is definitely picking up among mortgage lenders, who are beginning to realise the potential impact of a mega-catastrophe on their portfolios. On the corporate side, companies are looking at how they can make use of such powerful tools. They may want an independent view of their exposure to use for their risk management.

Contingent business interruption is another area where AIR is working. Says Karen, "A lot of companies are concerned about their business interruption exposures. They are interested in what disruption they could have, even if their own plant does not suffer damage, because in a mega-catastrophe, that becomes a higher probability."

Finally, there is the wider potential role for catastrophe modelling. Early in 2006, Karen became a member of a high-level advisory board on natural catastrophes set up by the Organisation for Economic Co-operation and Development (OECD), and she believes modelling could support its work.

"Models do not have to be used for insurance," she points out. The models first produce property damage estimates and then calculate insured and reinsured losses. "I believe there could be greater use made of models to quantify the benefits of mitigation," Karen argues. "Models can show what the most cost-effective forms of mitigation are."

With this comment, Karen returns to her theme that no matter how powerful they are, catastrophe models are not answer producers but decision making tools for management.

- Lee Coppack is the editor of Catastrophe Risk Management.

Lee.coppack@cat-risk.com

www.cat-risk.com