Overestimating catastrophe risk is as bad as underestimating it, as both create additional costs. We must understand risk better than our competitors to grow, decrease costs and maximise returns.

By Gero Michel

Risk intelligence is our ability, relative to our competitors, to assess a risk, according to David Apgar, author of the 2006 book Risk Intelligence: Learning to Manage What We Don't Know. He argues that risk falls essentially into two categories: risk that is knowable and therefore learnable, and risk that is random or unknowable and hence difficult to anticipate. Risk intelligence, therefore, depends on information advantages and how they are applied.

By differentiating between knowable and random risks, we can apply our efforts to learnable risks and, hence, minimise risk-related costs. In insurance and reinsurance terms, when we do this better than our competitors, we use our capital more efficiently.

Proprietary catastrophe exposure models released by commercial vendors have become a major part of our underwriting process. They enable underwriters to quantify risk technically and to analyse aggregates consistently at all relevant resolution levels, including for territories or perils they might know little about. The related uncertainty, however, is large, only loosely understood and difficult to incorporate into risk management.

These vendor models drive expected loss views and the calculation of capital costs. Yet they are “black boxes” to most of us, as we often feel that we do not sufficiently understand the underlying equations and scientific background that determine model results. What value, therefore, should we place on vendor modeling in terms of risk intelligence? This article aims to provide some insight into the background of the models and considers topics that might help in differentiating results from different vendor models.

Exposure underwriting considers future risk and focuses on future trends in exposure rather than on past losses. Pricing stands on its own for discrete periods: underwriting is done at rates that relate price to exposure, rather than on the assumption that losses will determine premiums over time.

These models do offer advantages over pricing based purely on experience, including:

• Filling in historical “loss voids” in both severity and frequency ranges.

• Relating changes in exposure to changes in risk.

• Allowing analysis at high resolution.

• Providing the ability to relate hazard intensities to losses, so enabling us to forecast losses for actual events.

• Helping to combine any number of event-loss curves for any number of available territories/perils to provide consistent as well as incremental portfolio risk measures and related capital costs.

• Allowing changes in climate or other conditions to be considered, if deemed appropriate for the near future.
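The portfolio-combination advantage above can be illustrated with a small event loss table sketch. It assumes, for illustration only, that each table lists an event ID, an annual occurrence rate and a modelled loss, that event IDs are consistent across territories and contracts, and that events occur as independent Poisson processes; the `Event` class, the function names and the loss figures are hypothetical and do not reflect any vendor's file format.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    event_id: str       # identifier shared across tables for the same simulated event
    annual_rate: float  # expected number of occurrences per year
    loss: float         # modelled loss if the event occurs


def combine(*tables):
    """Merge event loss tables; losses from the same event ID are summed,
    so an event that hits several contracts or territories counts once."""
    merged = {}
    for table in tables:
        for ev in table:
            prev = merged.get(ev.event_id)
            merged[ev.event_id] = ev if prev is None else Event(
                ev.event_id, prev.annual_rate, prev.loss + ev.loss)
    return list(merged.values())


def expected_annual_loss(table):
    """Sum of rate x loss over all events."""
    return sum(ev.annual_rate * ev.loss for ev in table)


def occurrence_exceedance_probability(table, threshold):
    """Probability of at least one event with loss >= threshold in a year,
    assuming independent Poisson occurrences for each event."""
    rate = sum(ev.annual_rate for ev in table if ev.loss >= threshold)
    return 1.0 - math.exp(-rate)


# Hypothetical tables (losses in millions) for illustration only.
portfolio = [Event("EU_WS_001", 0.02, 50.0), Event("EU_WS_002", 0.10, 10.0)]
new_contract = [Event("EU_WS_001", 0.02, 20.0), Event("US_HU_001", 0.05, 30.0)]

if __name__ == "__main__":
    combined = combine(portfolio, new_contract)
    for name, table in (("portfolio", portfolio), ("with new contract", combined)):
        print(f"{name}: EAL = {expected_annual_loss(table):.2f}, "
              f"P(loss >= 60) = {occurrence_exceedance_probability(table, 60.0):.4f}")
```

In this toy case the expected annual loss is additive (2.0 rises to 3.9, exactly the new contract's stand-alone 1.9), whereas the probability of an occurrence loss of 60 or more rises from zero to about 2% only because the new contract shares event EU_WS_001 with the existing portfolio. This is the kind of incremental tail measure that a consistent event-level combination makes visible.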

Exposure models, however, are not able to:

• Consider the “unknown”.

• Materially increase certainty about natural catastrophe losses.

• Differentiate between the skills of different models.

• Make predictions about the likelihood of losses.

Penetration of vendor models in the industry is high, as the broking and underwriting of catastrophe contracts have become increasingly dependent on their results. Differentiation in expected losses is now made at sub-percentage levels. Market prices for certain territories and perils appear to be converging within narrower ranges than ever before, making the catastrophe risk product increasingly commoditised.

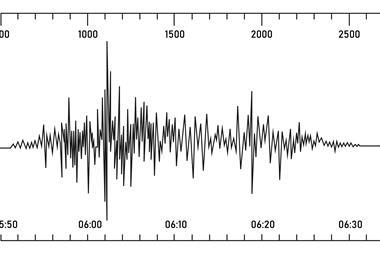

Narrow ranges in risk results are confusing for those of us who have worked in related scientific research. This is because the forecasting skill of the individual components of current models is low, and model results might diverge from actual losses by more than one order of magnitude at three-sigma levels, for example for vulnerability. Historical experience is limited, and scientists question actualistic theory, which argues that findings from the past suffice to forecast the future. Climate change, and physical models that are unproven and insufficiently understood, are additional factors that call cat models' forecasts into question.
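To make the "order of magnitude at three sigma" statement concrete, the sketch below assumes, purely for illustration, that the ratio of actual to modelled loss for a vulnerability estimate is lognormally distributed, so that the spread is governed by the standard deviation of its natural logarithm. The lognormal assumption, the function name and the sigma values are hypothetical and are not taken from any vendor model.

```python
import math


def three_sigma_factor(sigma_log: float) -> float:
    """Ratio of the +3-sigma quantile to the median for a lognormal quantity
    whose natural logarithm has standard deviation sigma_log."""
    return math.exp(3.0 * sigma_log)


# Smallest log-space standard deviation at which the +3-sigma outcome
# is ten times the median, i.e. exp(3 * sigma) = 10.
SIGMA_FOR_TENFOLD = math.log(10.0) / 3.0

if __name__ == "__main__":
    print(f"log-sigma needed for a tenfold spread at 3 sigma: {SIGMA_FOR_TENFOLD:.3f}")
    for sigma in (0.3, 0.5, 0.8, 1.0):
        print(f"sigma = {sigma:.1f}: +3-sigma loss is {three_sigma_factor(sigma):.1f} x the median")
```

Under this assumption a log-standard deviation of about 0.77 is already enough for a tenfold divergence at three sigma; at 0.8 the +3-sigma outcome is roughly 11 times the median, which shows how quickly a single mean value can understate the plausible range.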

Does the market overestimate the accuracy of models?

Probably yes! Underwriters often do not consider uncertainty and possible ranges of outcome, concentrating instead on single mean values. Part of the uncertainty inherent in models is only loosely understood. This includes activity rate uncertainty and the correlation of uncertainty, or the uncertainty related to the disaggregation of hazard. In addition, models weight certain subjective scientific views more heavily than others. Underwriters do not consider epistemic uncertainty, or consider it only loosely, and the overall risk assessment skill of models is quantified only loosely, if at all.

If uncertainty is indeed large, why do models produce mean loss forecasts that are taken to reflect actual event losses accurately, and why have model results for recent events fallen within loss forecast ranges that are relatively narrow given the uncertainties involved?

As vendor models provide results for tail or extreme risk rather than for frequency risk, their forecasting skill is admittedly difficult to quantify. Results are therefore viewed in the light of market opinion on return period losses. Vendor models are calibrated at various levels, including a final overall calibration that combines primary input data with overall output results. This means that vendor models tend to erase offsets between individual components of the risk formula and adjust final model results to expected outcomes. This overall calibration procedure has costs, which include:

1. Model risk. This risk relates to parameter assumptions as well as to the art of modeling, which includes managing loss results towards loss expectations, thereby narrowing the possible range of loss outcomes.

2. Physical forecasting tools. The use of models for physical forecasting of actual losses is limited and can be misleading, as the relationship between hazard intensities and losses is altered in the overall calibration process.

3. Offsets in risk results due to incomplete exposure data used for model calibration. Offsets between the overall exposure that vendors assume to be in place and the exposure actually at risk will result in offsets in loss results for client portfolios in certain territories and/or perils, and can cause significant additional offsets in loss forecasts between model generations.

4. Comparison of territories. Model results become difficult to compare for different territories and/or perils, as the models are created with local rather than global data, and territory-specific models are calibrated independently of each other.

5. Assumption of data completeness and consistency. Vendor exposure models assume that exposure input is complete and accurate, and do not consider the related uncertainty. They also assume consistent claims management, underwriting discipline and/or payback mentality across companies. This means that additional risk quality measures and differences in primary risk handling are not coded into the models.

Discernment

There is no single and simple solution that enables us to appreciate uncertainty fully, to discern which model is best for which territory or peril, or to alter model results to make them comparable within an underwriting portfolio.

The following are possible ways to value and differentiate various results from vendor models:

• Consider ranges in results rather than mean losses, and run more than one exposure model and/or several versions of one exposure model at the same time. You might find that the changes in results between versions of the same model create additional ranges in volatility, similar in size to the volatility inherent in the model for different territories.

• Compare the completeness of the industry exposure data sets published by vendors with your own view of the market; vendor industry exposure that exceeds your estimate suggests that the vendor is assuming higher risk results, and vice versa.

• Check recent historical losses. You might find that certain accounts or portfolios consistently do better. This can be due to local or regional inefficiencies of models, incomplete exposure data or better-than-average claims handling. Studies following the losses from windstorm Kyrill in central Europe in 2007, for example, suggest that differences in claims handling could have a larger effect than regional differences in hazard.

• Be careful with vendor loss results in the high frequency range. Check historical losses in the frequency range and consider fitting frequency-severity distributions to the inflation-adjusted loss experience for your assessment of frequency risk. Bear in mind that the models need proxies to assess high frequency risk, as the number of model events available might be smaller than the number needed to reflect the risk accurately at high resolution levels. You might also want to assume that the accuracy of modelled versus actual losses is not necessarily consistent between the high frequency and the severity ranges.

• Scrutinise any new model or new model version. Are results consistent with loss experience? Why are model results different from those of your in-house actuarial assessment?

• Check results for “aggregate” as well as “detailed” model output for reinsurance. It is nice to have detailed information, but it does not necessarily mean better results. Check differences in results; do you understand why the detailed results are higher or lower?

• Verify exposure data for reinsurance treaties. Higher resolution does not necessarily mean more complete data. Check premium, number of risks, changes in portfolio composition and so on to test data completeness. No exposure data set is complete, nor is any exposure free of uncertainty. Stress testing exposure data sets by scrutinising limits, coding and data completeness is helpful. Exposure uncertainty might not be the largest part of the uncertainty inherent in the model, but it is the easiest to control.

• Examine differences in territory risk along or parallel to hazard gradients that are deemed consistent, such as distance to coast. Are there unexplained voids in the model, and/or do certain models predict a different penetration of risk away from the coast, fault or other focus of hazard?

• Compare model results for comparable territories. How obvious are the differences given the variations in exposure, vulnerability or hazard?

• Consider published scientific and industry damage curves and value at risk (VaR) or tail value at risk (TVaR) results, and how they relate to vendor model results.
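The frequency-severity and VaR/TVaR checks in the list above can be sketched as an independent in-house view to hold against vendor output. This is a minimal illustration under stated assumptions, not how vendor models work: a Poisson frequency, a single-parameter Pareto severity above a threshold, a flat 3% annual trend for restating historical losses, and a hypothetical loss history. All function names and figures are hypothetical; the Poisson draw uses Knuth's multiplication method because the Python standard library has no Poisson sampler.

```python
import math
import random
import statistics


def trend_losses(losses, current_year, annual_trend):
    """Restate (year, loss) pairs in current_year terms with a flat compound trend."""
    return [loss * (1.0 + annual_trend) ** (current_year - year) for year, loss in losses]


def fit_poisson_pareto(trended, threshold, years_observed):
    """Poisson frequency plus single-parameter Pareto severity above threshold.
    The Pareto shape uses the maximum-likelihood estimate n / sum(ln(x / threshold))."""
    excess = [x for x in trended if x > threshold]
    if not excess:
        raise ValueError("no trended losses above the threshold")
    rate = len(excess) / years_observed
    alpha = len(excess) / sum(math.log(x / threshold) for x in excess)
    return rate, alpha


def poisson_draw(rng, lam):
    """Knuth's multiplication method; adequate for small annual rates."""
    limit = math.exp(-lam)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_losses(rate, alpha, threshold, n_years, seed=1):
    """Aggregate annual loss from events above threshold, for n_years simulated years."""
    rng = random.Random(seed)
    return [
        sum(threshold * rng.paretovariate(alpha) for _ in range(poisson_draw(rng, rate)))
        for _ in range(n_years)
    ]


def var_tvar(annual_losses, q):
    """Empirical VaR at level q, and TVaR as the mean of the worst (1 - q) share of years."""
    ordered = sorted(annual_losses)
    k = max(1, round((1.0 - q) * len(ordered)))
    tail = ordered[-k:]
    return tail[0], statistics.fmean(tail)


if __name__ == "__main__":
    # Hypothetical loss history: (year, loss in millions at the time of loss).
    history = [(1990, 40.0), (1993, 75.0), (1999, 160.0), (2002, 55.0), (2007, 210.0)]
    trended = trend_losses(history, current_year=2008, annual_trend=0.03)
    rate, alpha = fit_poisson_pareto(trended, threshold=60.0, years_observed=18)
    annual = simulate_annual_losses(rate, alpha, threshold=60.0, n_years=10_000)
    var99, tvar99 = var_tvar(annual, 0.99)
    print(f"rate = {rate:.3f}/yr, alpha = {alpha:.2f}, "
          f"99% VaR = {var99:.0f}, 99% TVaR = {tvar99:.0f}")
```

Note that the 1990 loss of 40 only exceeds the 60 threshold once it is trended, which is why fitting to inflation-adjusted rather than nominal experience matters. With so few data points the fitted Pareto shape is itself highly uncertain; rerunning with a different threshold or trend rate is a quick way to see a range of outcomes rather than a single number.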

Finally, educate your company about the uncertainty in modeling and about the dangers of using models blindly, loaded or unloaded, for pricing and for assessing company risk. Bear in mind that using the same model for technical pricing as well as for aggregate/probable maximum loss (PML) assessment might steer the company into a dangerous position, where certain model-related shortcomings turn into feedback loops that could lead to unexpectedly high concentrations of risk.

Various universities, science centers and scientific networks have recently been taking an interest in exposure modeling and cat risk. We might want to work with these organisations to further differentiate our view of risk beyond the common use of vendor models.

How much skill is available for risk forecasts with horizons of less than five years, and can we leverage existing results for side-perils and for modelling on a more consistent global basis? We might find that risk for some territories and perils can be understood better than for others, because that risk changes significantly over the years and is determined by certain short-term conditions that might be learnable.

We should recognise that further commoditisation of cat risk products is not desirable, especially in a globally softening market, because it results in narrow price ranges at thin margins, puts pressure on vendors to reconsider more conservative estimates, and fosters a dangerous belief that we are safe as long as we consider risk within the margins of a vendor tool.

Differentiating our understanding of risk can help us to distinguish ourselves from others in the market, minimise volatility and add longer-term value for our clients where others cannot.

Postscript

Gero Michel is senior vice president, international property catastrophe team lead, Endurance Specialty Insurance.

gmichel@endurance.bm

www.endurance.bm
